Regex catches the obvious. LLMs catch what regex misses. Both tiers run on every file.

The secrets regex-only tools miss aren't hiding. They're obfuscated. Credentials constructed by string concatenation, passed through environment indirection, or buried in serialized data. Our two-tier scanner catches them, then classifies every confirmed secret by type, scope, and blast radius.

Beyond the obvious credential classes, the scanner catches the long tail: tokens in URLs, environment indirection, and high-entropy strings that don't match any known signature.

Personal access tokens, OAuth tokens, GitHub App credentials.

Stripe, Slack webhooks, bot tokens, and other third-party vendor keys.

Base64- and hex-encoded secrets flagged by entropy analysis when they don't match any known pattern.
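As a rough illustration of what entropy analysis means here, the sketch below computes Shannon entropy in bits per character and flags long, high-entropy tokens. The length and entropy thresholds are hypothetical values chosen for the example, not Keygraph's actual settings.

```python
import math
from collections import Counter

# Hypothetical thresholds for illustration only; real scanners tune these.
MIN_LENGTH = 20
ENTROPY_THRESHOLD = 4.0


def shannon_entropy(s: str) -> float:
    """Return the Shannon entropy of s in bits per character."""
    if not s:
        return 0.0
    counts = Counter(s)
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def looks_high_entropy(token: str) -> bool:
    """Flag tokens that are both long and close to random-looking."""
    return len(token) >= MIN_LENGTH and shannon_entropy(token) >= ENTROPY_THRESHOLD
```

A repeated character scores 0 bits, while a string of 32 distinct characters scores 5 bits, so random-looking keys stand out from ordinary identifiers.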

Credentials embedded in URL query parameters or fragments. Easy to leak through logs and referrer headers.
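A minimal sketch of how credentials in a URL's query string or fragment might be surfaced, using only the Python standard library. The parameter names in `SUSPECT_PARAMS` are an assumed shortlist for illustration, not the scanner's real rule set.

```python
from urllib.parse import parse_qsl, urlsplit

# Assumed shortlist of parameter names that commonly carry credentials.
SUSPECT_PARAMS = {
    "token", "access_token", "api_key", "apikey",
    "key", "secret", "password", "signature",
}


def credentials_in_url(url: str) -> list:
    """Return (location, name, value) for suspect params in query or fragment."""
    parts = urlsplit(url)
    findings = []
    for location, raw in (("query", parts.query), ("fragment", parts.fragment)):
        # parse_qsl skips blank values by default, so empty params are ignored.
        for name, value in parse_qsl(raw):
            if name.lower() in SUSPECT_PARAMS:
                findings.append((location, name, value))
    return findings
```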

Secrets set via ENV in Dockerfiles, docker-compose, and config files that ship with the image.

Secrets buried inside base64 blobs, JWT payloads, or hex-encoded config strings.
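To show why encoded data needs a second look, here is a small sketch that decodes a JWT payload (the middle, base64url-encoded segment) and lists claim names that look like they hold secrets. The `SUSPECT_KEYS` list and both function names are hypothetical; signature verification is deliberately out of scope.

```python
import base64
import json
from typing import Optional

# Assumed substrings that suggest a claim carries a credential.
SUSPECT_KEYS = ("secret", "password", "api_key", "token")


def decode_jwt_payload(token: str) -> Optional[dict]:
    """Decode the payload segment of a JWT without verifying the signature."""
    parts = token.split(".")
    if len(parts) != 3:
        return None
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # restore stripped base64 padding
    try:
        data = json.loads(base64.urlsafe_b64decode(payload))
    except ValueError:  # covers bad base64, bad UTF-8, and bad JSON
        return None
    return data if isinstance(data, dict) else None


def suspicious_claims(token: str) -> list:
    """Return the sorted claim names that match a suspect substring."""
    payload = decode_jwt_payload(token) or {}
    return sorted(k for k in payload if any(s in k.lower() for s in SUSPECT_KEYS))
```

The same decode-then-rescan idea applies to plain base64 or hex blobs: decode first, then run the usual checks on the result.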

HMAC signing keys for inbound webhooks, often hardcoded in handlers and CI scripts.

HS256 secrets and RS256 private keys used to sign session tokens, leaked in test files and configs.

Fast pattern matching catches the obvious secrets in milliseconds. LLM validation catches the obfuscated ones regex misses. Every finding classified before it reaches your queue.

Sweeps the codebase for known secret formats across configs, environment files, scripts, IaC templates, CI/CD pipelines, and application source.
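The pattern-matching tier can be pictured with a sketch like the one below. The three patterns are widely known public formats used purely as examples; they are not Keygraph's actual rule set, and `scan_text` is a hypothetical helper.

```python
import re

# Illustrative patterns for well-known formats (an assumed, tiny subset).
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
}


def scan_text(text: str) -> list:
    """Return (line_number, pattern_name, match) for every pattern hit."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            for match in pattern.finditer(line):
                findings.append((lineno, name, match.group(0)))
    return findings
```

A literal key is caught at once, but the same key split as `"AKIA" + "IOSFODNN7EXAMPLE"` produces no match, which is the gap the LLM tier is meant to cover.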

Every candidate is evaluated for context by an LLM. Is this a real credential, or a placeholder, test fixture, or documentation example? The model also catches non-obvious exposures that pure regex misses.

Every confirmed secret tagged by type, scope, and blast radius. You see what the credential unlocks before you triage it.

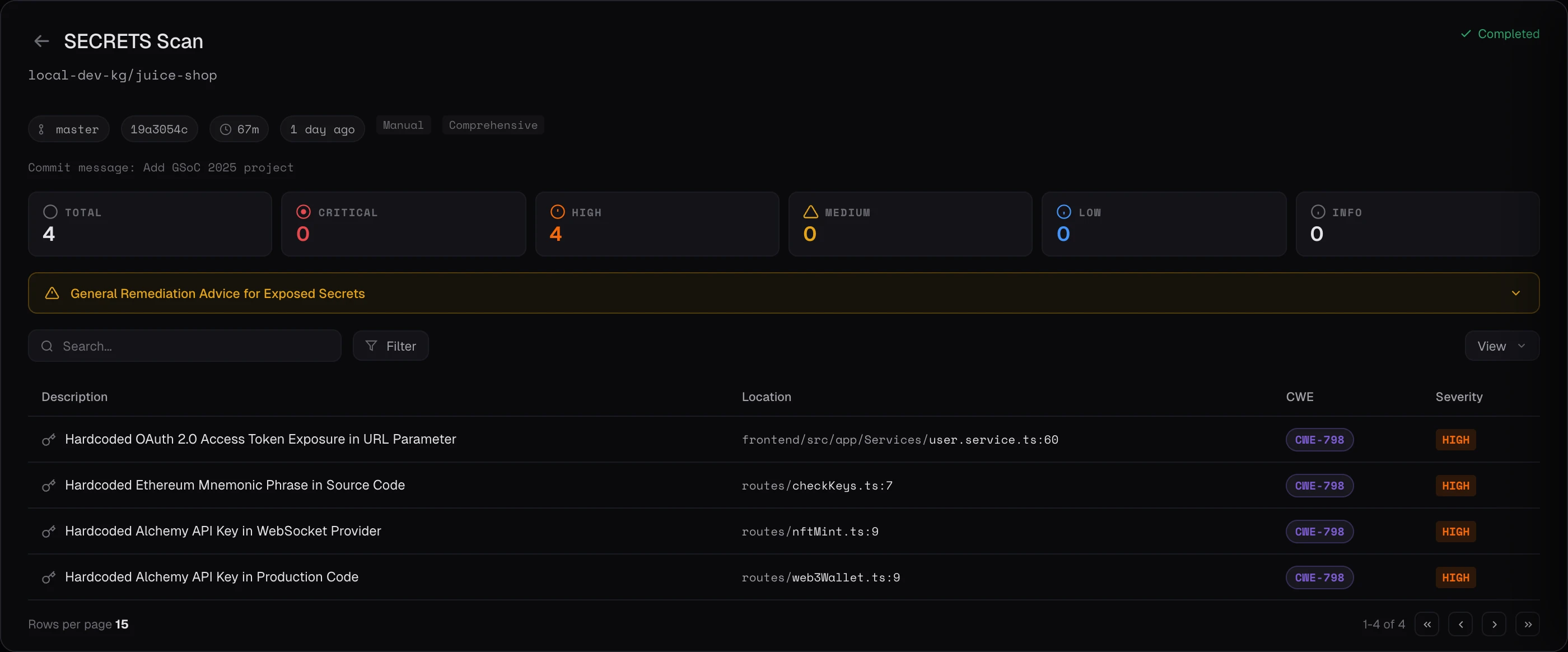

Findings are auto-classified by blast radius. Cloud credentials and private keys get the highest urgency because they unlock the most. Database credentials and API keys follow as High priority.
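One way to picture this ordering is a simple lookup from secret type to urgency. The type names, the `CRITICAL` label for the top tier, and the `MEDIUM` default are assumptions made for this sketch; the actual rubric may differ.

```python
from enum import IntEnum


class Urgency(IntEnum):
    MEDIUM = 1    # assumed default for types not named on this page
    HIGH = 2
    CRITICAL = 3  # assumed label for "highest urgency"


# Hypothetical type names mapped to the tiers described above.
URGENCY_BY_TYPE = {
    "cloud_credential": Urgency.CRITICAL,
    "private_key": Urgency.CRITICAL,
    "database_credential": Urgency.HIGH,
    "api_key": Urgency.HIGH,
}


def classify(secret_type: str) -> Urgency:
    """Look up urgency for a secret type, falling back to MEDIUM."""
    return URGENCY_BY_TYPE.get(secret_type, Urgency.MEDIUM)


def triage_order(findings: list) -> list:
    """Sort findings so the widest blast radius comes first (stable sort)."""
    return sorted(findings, key=lambda f: classify(f["type"]), reverse=True)
```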

See classification rubric →

Every scan ends with a verification phase that confirms each finding, filters out findings from test files, fixtures, and example documentation, and deduplicates overlapping issues. The result is a report you can actually act on, with a low false-positive rate.

For secrets specifically, this means the LLM revisits every candidate credential in context and asks: is this a real secret, or a placeholder, test fixture, or example from documentation?

Each finding is evaluated in context against the actual code before it lands in your queue.

Findings from test files, fixtures, and documentation examples are filtered out automatically.

Overlapping findings collapse into a single actionable item, so you triage once instead of three times.
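A minimal sketch of how overlapping findings could collapse, assuming that two findings overlap when they share a file, a line, and the same secret value. The `Finding` shape and the merge key are hypothetical, chosen only to illustrate the idea.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Finding:
    path: str
    line: int
    value: str
    detectors: set = field(default_factory=set)


def dedupe(findings: list) -> list:
    """Merge findings that share path, line, and secret value."""
    merged = {}
    for f in findings:
        # Hash the value so the raw secret is not used as a dictionary key.
        key = (f.path, f.line, hashlib.sha256(f.value.encode()).hexdigest())
        if key in merged:
            merged[key].detectors |= f.detectors
        else:
            # Copy the detector set so the input findings are not mutated.
            merged[key] = Finding(f.path, f.line, f.value, set(f.detectors))
    return list(merged.values())
```

Three detectors firing on the same line then yield one item that records all three sources.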

Mark a finding as a false positive once and Keygraph tags it as a likely false positive on every subsequent scan. Dismissed issues stop showing up.
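One plausible way to remember a dismissal across scans is a stable fingerprint per finding. The sketch below assumes the fingerprint is a hash of file path plus secret value; how Keygraph actually identifies a repeated finding is not stated here, and `DismissalStore` is a hypothetical name.

```python
import hashlib


def fingerprint(path: str, value: str) -> str:
    """Stable identifier for a finding, assuming path + value identify it."""
    # A NUL separator keeps ("a", "bc") distinct from ("ab", "c").
    return hashlib.sha256(f"{path}\x00{value}".encode()).hexdigest()


class DismissalStore:
    """In-memory record of findings a user marked as false positives."""

    def __init__(self) -> None:
        self._dismissed = set()

    def dismiss(self, path: str, value: str) -> None:
        self._dismissed.add(fingerprint(path, value))

    def is_likely_false_positive(self, path: str, value: str) -> bool:
        return fingerprint(path, value) in self._dismissed
```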

Schedule a demo and watch Secrets Scanning run against a real repo.